By Charlotte French, Business Development Manager at fonemedia & Solutions by fonemedia

I recently joined Kyle Campbell and Jonny Corless live on LinkedIn to talk about the growing gap between how universities present themselves online and how AI actually finds and recommends them to students.

It was a wide-ranging conversation, and I wanted to pull some of the key threads together here for anyone who couldn’t make it, or who wants to sit with the ideas a little longer.

The discovery journey has already changed

Let’s start with the reality. 38% of UK students are now explicitly using AI to research universities, and that figure is growing at over 40% year on year. This isn’t early adoption. It’s mass adoption, and it’s happening right now, in this Clearing cycle.

What it means in practice is that AI is moving the discovery process upstream. Students are forming shortlists before they ever reach Google, let alone a university’s website. By the time they click on a paid search ad or land on a course page, many have already made provisional decisions based on what an AI told them. Search ads and organic results are increasingly acting as confirmation channels. For universities that have historically leaned heavily on paid search to drive Clearing enquiries, that distinction matters.

Visibility and sentiment are not the same thing

One of the most useful frameworks Jonny shared (and it’s central to the Iff Digital GEO Education Index) is the distinction between visibility and sentiment. You can appear frequently in AI-generated answers and still lose ground if the way you’re being discussed isn’t favourable. Equally, a university with lower overall visibility can punch well above its weight when the sentiment around it is consistently strong.

The Index, which analysed 10 Yorkshire-based universities across 5,000+ education-specific AI prompts, found that Russell Group institutions hold a significant structural advantage in AI search, not because they’re necessarily better, but because decades of research output, media coverage and third-party citations have built a rich offsite profile that AI draws on heavily. Leeds Trinity is a striking example of what’s possible on the other side of that equation: it scored 100% AI favourability, higher than the University of Leeds itself. Prestige bias is real, but it is beatable.

Where strengths are being hidden

The Index also surfaced something that should concern any university marketing team: strong institutions are routinely invisible on the very things they’re best known for.

One prompt tested was “What are the best UK universities for internships and work placements integrated into degrees?” Leeds Beckett (for whom industry placements are a genuine institutional strength) returned at 18th position. The University of Leeds came in at 12th. The AI had no reliable way to distinguish the two on that specific strength, because Leeds Beckett’s content wasn’t structured to make that case.

Three things drive this kind of invisibility. First, content buried in PDFs: AI crawlers often struggle to extract text from them, so anything housed in a PDF is effectively invisible to AI regardless of how compelling it is. Second, missing facts and USPs: AI looks for qualified claims, data points and specific features to form its responses, so aspirational marketing copy won’t get cited. Third, unstructured data: the traditional wall of prose that accompanies most course pages is no longer sufficient, because AI processes well-structured content far more effectively.

What you can actually do about it

The good news is that sentiment, unlike citation volume, is genuinely something every institution can improve, regardless of size or heritage.

On your own site, the priority is rewriting course pages to lead with specific, crawlable facts: graduate outcomes, salary data, accreditations, entry requirements. Build dedicated pages that directly answer the questions students are typing into ChatGPT and Gemini. Implement schema markup. Make sure your EEAT signals (author profiles, staff pages, awards) are visible and up to date.
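To make "implement schema markup" concrete, here is a minimal sketch of generating schema.org Course markup as JSON-LD for a course page. The institution name, URL, course details and function names are all hypothetical placeholders, and the right set of properties will depend on your own pages and CMS, so treat this as a starting point and not a definitive template.

```python
import json


def course_jsonld(name, description, provider_name, provider_url,
                  credential, prerequisites):
    """Build a schema.org Course description as a plain dict.

    All argument values are supplied by the caller; nothing here is
    pulled from a real university's data.
    """
    return {
        "@context": "https://schema.org",
        "@type": "Course",
        "name": name,
        "description": description,
        "provider": {
            "@type": "CollegeOrUniversity",
            "name": provider_name,
            "url": provider_url,
        },
        "educationalCredentialAwarded": credential,
        "coursePrerequisites": prerequisites,
    }


def render_script_tag(data):
    """Wrap the JSON-LD in the script tag that goes in the page HTML."""
    # Escape "</" so text inside the JSON cannot close the script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + payload + "\n</script>"


# Hypothetical example values, for illustration only.
example = course_jsonld(
    name="BSc (Hons) Computer Science with Industry Placement",
    description="Three-year degree with an integrated placement year.",
    provider_name="Example University",
    provider_url="https://www.example.ac.uk",
    credential="BSc (Hons)",
    prerequisites="112 UCAS tariff points",
)
tag = render_script_tag(example)
print(tag)
```

The point of the sketch is that the facts students ask AI about (award, entry requirements, placement) sit in named fields and not only in body copy.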

Off-site, it means being intentional about where your institution’s voice exists across the web. Student review platforms, subject-specific rankings, sector publications, Reddit and LinkedIn all contribute to the profile AI draws on. Internal satisfaction scores that never make it onto public platforms might as well not exist, as far as AI is concerned.

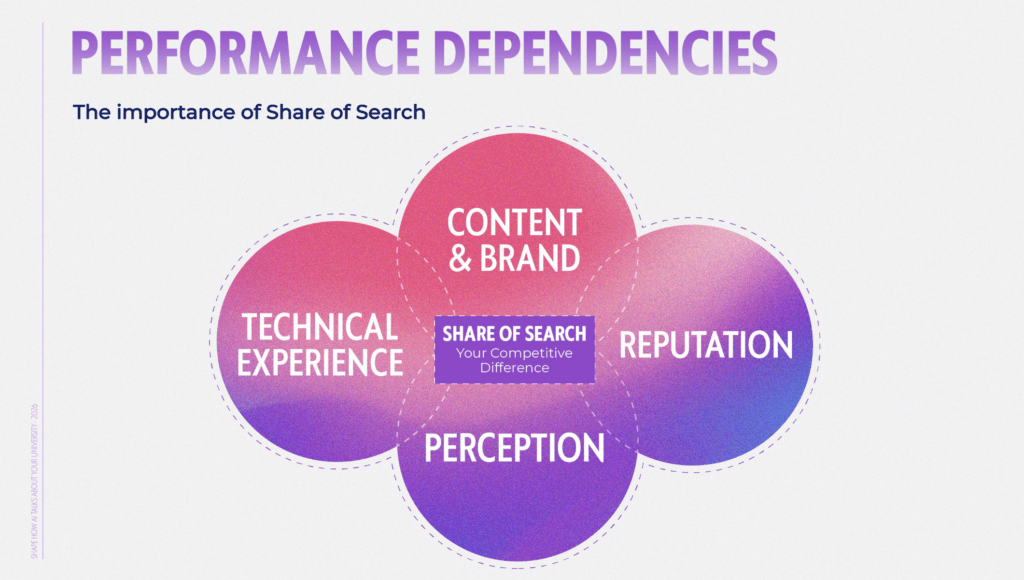

Measuring what matters

The measurement model needs to evolve alongside all of this. The metrics that will tell you whether your strategy is working are AI sentiment quality, citation frequency by topic, enquiry quality, and share of voice in the subjects you want to own. Raw traffic figures alone won’t.
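As a rough illustration of share of voice by topic, the sketch below assumes you already have a log of AI answers recording which institutions each answer mentioned and which subject area the prompt belonged to. The record format, the institution names and the function name are hypothetical; this is one simple way to define the metric, not the method used in the Index.

```python
from collections import defaultdict


def share_of_voice(results, institution):
    """Return, per topic, the fraction of all institution mentions
    that belong to the given institution."""
    mentions = defaultdict(int)  # mentions of our institution per topic
    totals = defaultdict(int)    # mentions of any institution per topic
    for record in results:
        topic = record["topic"]
        for name in record["mentioned"]:
            totals[topic] += 1
            if name == institution:
                mentions[topic] += 1
    return {topic: mentions[topic] / totals[topic] for topic in totals}


# Hypothetical prompt log: one record per AI answer.
prompt_log = [
    {"topic": "placements", "mentioned": ["Uni A", "Uni B"]},
    {"topic": "placements", "mentioned": ["Uni B"]},
    {"topic": "nursing", "mentioned": ["Uni A"]},
]

sov = share_of_voice(prompt_log, "Uni A")
for topic, share in sorted(sov.items()):
    print(f"{topic}: {share:.0%}")
```

Tracked over time and split by subject, a figure like this shows whether you are gaining ground in the areas you want to own, which raw session counts cannot.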

If AI is pre-qualifying applicants more effectively, you should expect to see better-matched enquiries and faster conversion velocity, even if overall session numbers fall. Building the reporting infrastructure to actually see that is where a lot of teams need to start.

We’ve pulled the key thinking from the session, alongside the mobile marketing picture for Clearing, into a practical guide you can take back to your team:

Download the Clearing 2026: Smarter Student Recruitment Playbook

Sources: Iff Digital GEO Education Index, 2025; HEPI/Kortext Student Generative AI Survey 2025 (1,041 UK undergraduates, Savanta, Dec 2024).